Among the nicest oldschool effects are the 2D LUT deformations and tunnels. They were very fast to compute in realtime, in software (without GPUs), and very spectacular too, so they were very common back in the late 1990s when I first learnt about them. The idea is to deform a texture by some mathematical function. But because evaluating mathematical functions for every pixel on the screen was impractical back then, a texture was used instead as a LUT (Look Up Table). That way it was possible to create animated flowers, fake 3D landscapes, tunnels, whirlpools, holes, etc. I used them a lot myself during 1999 and 2000; you can see them in action in demos like Rare or Storm.

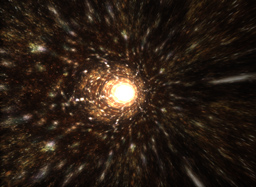

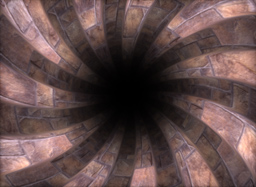

Today you can do the same effect very easily with pixel shaders, with much less effort and at the same quality, since the GPU does a lot of the mipmapping and bilinear filtering that you would otherwise have to do manually.

In either case the idea is to take the screen buffer, and map a texture to it. For example, a trivial way to display such a texture would be to do something like this:

    void renderDeformation( int *buffer, float time )
    {
        for( int j=0; j<mYres; j++ )
        for( int i=0; i<mXres; i++ )
            buffer[mXres*j+i] = mTexture[ 512*(j&511) + (i&511) ];
    }

where I assume the texture is 512 x 512 pixels and the screen is mXres x mYres pixels. The texture will repeat over the screen as needed, thanks to the AND operations. This code just copies one texel to one pixel. The same code in a pixel shader (GLSL) would look like this:

    uniform sampler2D texCol;

    void main( void )
    {
        gl_FragColor = texture2D( texCol, gl_TexCoord[0].xy );
    }
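As a side note on the AND trick used in the software version: masking with 511 is the same as wrapping the coordinate into 0..511, but only because 512 is a power of two. A minimal sketch of that idea follows; the helper name `wrapPow2` is made up for illustration and is not part of the original code, and the negative case assumes the usual two's complement integers.

```c
#include <assert.h>

/* Hypothetical helper: wrap a texel coordinate into [0, size).
   Only valid when size is a power of two, because then size-1
   is a mask with all the low bits set. */
int wrapPow2( int c, int size )
{
    assert( size > 0 && (size & (size-1)) == 0 );
    return c & (size-1);
}
```

For a texture whose size is not a power of two the mask no longer works, and a modulo (with care for negative values) would be needed instead.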

Now that we have our texture displayed on screen we can start coding the real effect. The trick is to modify the texture coordinates (i,j) in the software rendering version or gl_TexCoord[0].xy in the GLSL version with crazy formulas to get nice deformations. Instead of adding lots of calculations to our code, we will precompute the formulas in some look up table (LUT). That means that we will input the coordinates to the LUT and the LUT will give us some new coordinates back. Something like this:

    void renderDeformation( int *buffer, float time )
    {
        // integer scroll offset, in texels
        const int itime = (int)(10.0f*time);

        for( int j=0; j<mYres; j++ )
        for( int i=0; i<mXres; i++ )
        {
            // pixel index; the LUT holds one (u,v) pair per pixel
            const int o = mXres*j + i;
            const int u = mLUT[ 2*o+0 ] + itime;
            const int v = mLUT[ 2*o+1 ] + itime;
            buffer[o] = mTexture[ 512*(v&511) + (u&511) ];
        }
    }

for software rendering, and like this for GLSL:

    uniform float time;
    uniform sampler2D texCol;
    uniform sampler2D texLUT;

    void main( void )
    {
        vec4 uv = texture2D( texLUT, gl_TexCoord[0].xy );
        vec4 co = texture2D( texCol, uv.xy + vec2(time) );
        gl_FragColor = co;
    }
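To experiment with the software version outside of a demo framework, here is a minimal self-contained sketch of the same LUT lookup. It is not the original code: the function name `renderWithLUT`, the explicit parameters (instead of the `m...` members) and the `texSize` argument are assumptions made for illustration, and the texture size is assumed to be a power of two so the AND wrap still works.

```c
#include <assert.h>
#include <stdint.h>

/* buffer : xres*yres output pixels
   lut    : 2*xres*yres entries, one (u,v) pair per pixel, in texels
   texture: texSize*texSize texels, texSize a power of two */
void renderWithLUT( uint32_t *buffer, const int16_t *lut,
                    const uint32_t *texture, int texSize,
                    int xres, int yres, float time )
{
    assert( buffer && lut && texture && xres > 0 && yres > 0 );
    assert( texSize > 0 && (texSize & (texSize-1)) == 0 );

    const int mask  = texSize - 1;
    const int itime = (int)(10.0f*time);   /* scroll offset in texels */

    for( int j=0; j<yres; j++ )
    for( int i=0; i<xres; i++ )
    {
        const int o = xres*j + i;
        const int u = lut[2*o+0] + itime;
        const int v = lut[2*o+1] + itime;
        buffer[o] = texture[ texSize*(v & mask) + (u & mask) ];
    }
}
```

Passing everything as parameters makes it easy to feed the function a tiny hand-written LUT and texture and check by hand which texel each pixel receives.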

Adding a time factor to the texture coordinates is done so that the texture moves. It's now up to us to be imaginative and fill the LUT with interesting things. For example,

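    #include <math.h>   // sqrtf, atan2f, cosf, sinf, fmaxf

    void createLUT( void )
    {
        int k = 0;
        for( int j=0; j<mYres; j++ )
        for( int i=0; i<mXres; i++ )
        {
            // pixel coordinates normalized to [-1,1)
            const float x = -1.00f + 2.00f*(float)i/(float)mXres;
            const float y = -1.00f + 2.00f*(float)j/(float)mYres;
            // polar coordinates; d is clamped to avoid dividing by zero at the center
            const float d = fmaxf( sqrtf( x*x + y*y ), 1e-4f );
            const float a = atan2f( y, x );

            // magic formulas here
            float u = cosf( a )/d;
            float v = sinf( a )/d;

            // store as texel coordinates wrapped to 0..511
            mLUT[k++] = ((int)(512.0f*u)) & 511;
            mLUT[k++] = ((int)(512.0f*v)) & 511;
        }
    }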

will produce an interesting deformation for the software rendering version. As shown in the code, the LUT stores 16 bit integer values per channel (the entries are masked to 0..511). If we were implementing the effect in GLSL, we had better use a floating point texture for the LUT (float16 is more than enough; we don't need full 32 bit floating point precision), and the code to fill the texture would be

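    #include <math.h>   // sqrtf, atan2f, cosf, sinf, fmaxf, fmodf

    void createLUT( float *lutTexture )
    {
        for( int j=0; j<mYres; j++ )
        for( int i=0; i<mXres; i++ )
        {
            // pixel coordinates normalized to [-1,1)
            const float x = -1.00f + 2.00f*(float)i/(float)mXres;
            const float y = -1.00f + 2.00f*(float)j/(float)mYres;
            // polar coordinates; d is clamped to avoid dividing by zero at the center
            const float d = fmaxf( sqrtf( x*x + y*y ), 1e-4f );
            const float a = atan2f( y, x );

            // magic formulas here
            float u = cosf( a )/d;
            float v = sinf( a )/d;

            // two floats per texel; keep only the fractional part
            *lutTexture++ = fmodf( u, 1.0f );
            *lutTexture++ = fmodf( v, 1.0f );
        }
    }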

It's lots of fun to invent as many formulas as you can. All the images in this article were made with this simple algorithm, using the following formulas (r is the distance to the center, called d in the code above, and a is the angle):

    u = x*cos(2*r) - y*sin(2*r)
    v = y*cos(2*r) + x*sin(2*r)

    u = 0.3/(r+0.5*x)
    v = 3*a/pi

    u = 0.02*y+0.03*cos(a*3)/r
    v = 0.02*x+0.03*sin(a*3)/r

    u = 0.1*x/(0.11+r*0.5)
    v = 0.1*y/(0.11+r*0.5)

    u = 0.5*a/pi
    v = sin(7*r)

    u = r*cos(a+r)
    v = r*sin(a+r)

    u = 1/(r+0.5+0.5*sin(5*a))
    v = a*3/pi

    u = x/abs(y)
    v = 1/abs(y)

Note that GPU shaders are very powerful these days, and you don't actually need LUTs anymore: you can do all the calculations per pixel and put the time factor directly inside the formulas. In fact, you can see some of the formulas above implemented as realtime shaders here:

https://www.shadertoy.com/view/Ms2SWW

https://www.shadertoy.com/view/Xsf3Rn

https://www.shadertoy.com/view/XdXGzn

https://www.shadertoy.com/view/Xdf3Rn

https://www.shadertoy.com/view/4sXGzn

https://www.shadertoy.com/view/4sXGRn