This page is a leftover from an experiment I did in 2005, using the then state-of-the-art techniques for realtime raytracing. These were the times of multithreaded SSE2-based packet tracers, SAH-based Kd-tree structures, and performance of around 10 million rays per second on complex models (tens to hundreds of millions of polygons). And while these days building and traversing acceleration structures happens in hardware, I'm leaving this article up for historical reference.

It started as a personal project and then turned into a professional one. I pretty much followed Ingo Wald's PhD thesis, and soon enough I got comparable results with our billion-polygon CAD models on 32-core Windows machines. I was proud of my work and soon added my own tricks, APIs and features to it. The main issue, of course, was memory coherency, so mostly primary rays and simple shadows were used, although short-distance, noisy ambient occlusion was doable with proper denoising (done in a separate buffer to avoid blurring the rest of the illumination and the albedo textures). Anyway, here are some screenshots and videos I took with this raytracer in 2005 on small models (the gigantic CAD models I was actually using were under NDA):

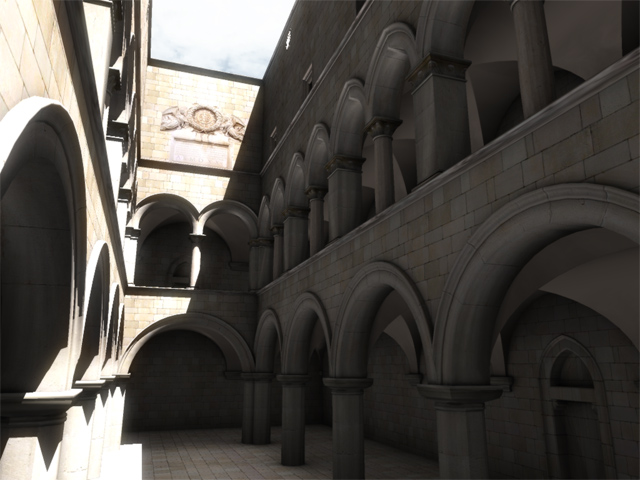

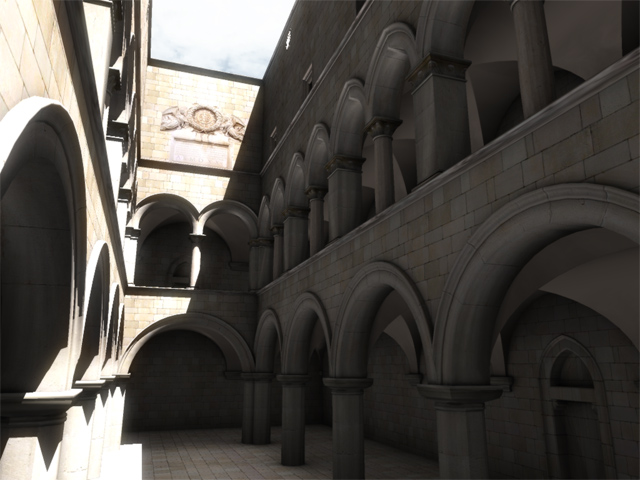

Realtime raytracing (2005)

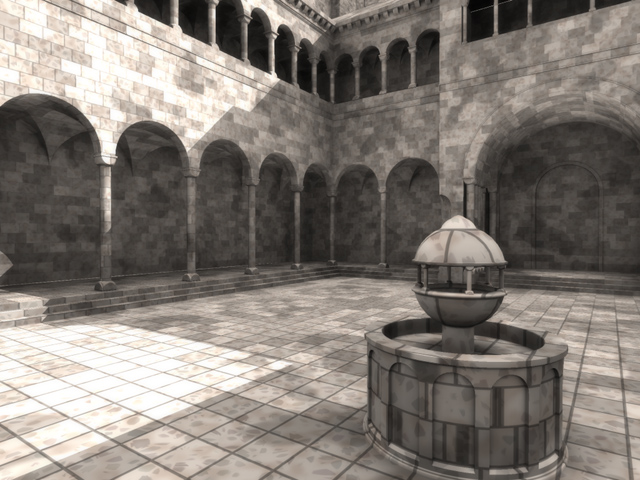

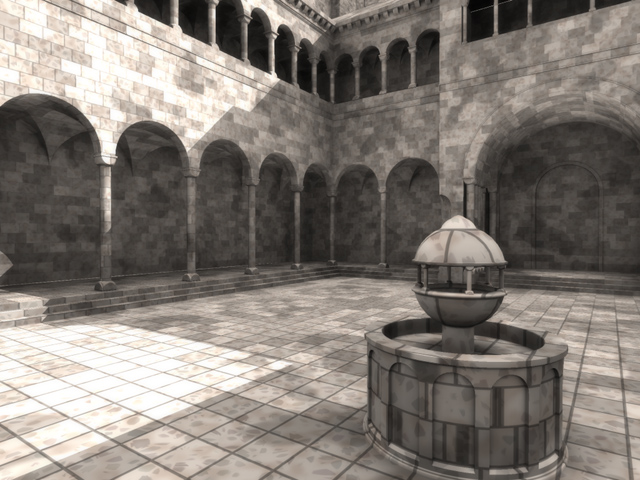

Ambient occlusion on the Atrium (2005)

Realtime raytracing (2005)

Ambient occlusion, 3 minutes to render (2005)

Ambient occlusion, 3 minutes to render (2005)

Ambient occlusion, 5 minutes to render

Ambient occlusion on the Atrium

Realtime raytracing (2005), 5 million polygons
